My close friend & colleague Michael Smith asked me:

Question for you: In terms of Donald Hoffman’s interface interpretation thing, have you found a way to suss out how different someone else’s interface really is? Like, a way around the freshman philosophy problem of “Do you experience what I call ‘red’ as what I’d call ‘blue’, but you just call it ‘red’ too?” But deeper. Like, I wonder whether “thing” and “other” and “space” are coded radically differently between people. I’d expect that your perspective-taking practices might have hit on something there. So I’m curious.

The short answer is pretty well-articulated by @yashkaf here, but of course we can do a longer answer as well!

My overall sense is that first order human perception is in some important sense pretty similar, although of course blind people are in a very different world. This is what allows us to maintain the illusion that it’s NOT all an interface.

Yet simultaneously, our experiences of everything are radically, radically different to a degree that is hard to fathom. Hoffman completely dissolves “Do you experience what I call ‘red’ as what I’d call ‘blue’, but you just call it ‘red’ too?” There is never a “is your red my red?” in the abstract. That’s like asking “is this apple that apple?” like uhh no they are different apples.

And thus in some ways, my red actually has more in common with my own blue than it does with your red. Both of my colors are entirely composed of all of my own experiences.

However, of course, your and my “red” are more compatible than my “red” and “blue”, for many reasons that are obvious but I’ll say them anyway:

All of which would lead us to create compatible or commensurate interfaces with red, such that we don’t encounter many differences there when we go to talk about it. And the word “red” is an interface we share for referring to this pattern.

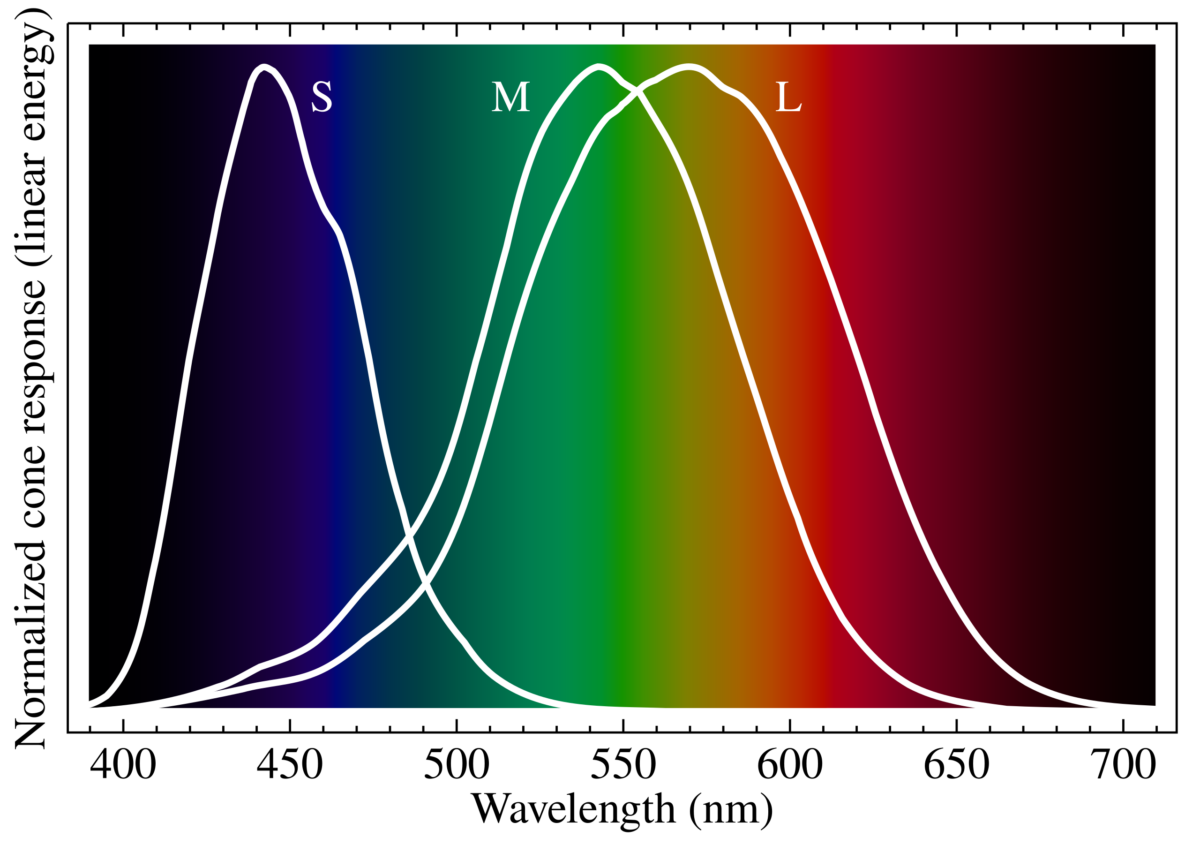

For what it’s worth I suspect actually that there are real subtle interfacial consequences to things like the fact that red is longer wavelength (lower energy) and for humans the fact that our red & green cone cells are much closer to each other than either is to blue (rods are between blue and green). I don’t know what the consequences are, but in an important sense it must matter. A tetrachromat (with a fourth kind of cone cell) would experience most colors quite differently. And of course colorblind people do.

However, the question of similarity or compatibility has an implicit context. You can do that with the apples too: are these two apples the same apple? Well in some sense of course they can be, if we know what we’re doing. They can be fungible or equivalent or undifferentiated, for some purpose.

And of course there are many ways in which our interfaces with the color red may be incompatible. When I try to point at these, they seem symbolic, somehow separate from the level of perception.

If you’re American and I’m Canadian (which I am) then you might be surprised to discover that for me, red is associated with the political “left wing” and blue with the political “right wing” (I’ll caveat that these concepts are themselves ever-shifting coalitions, not very natural categories). In fact, my way is more common in the rest of the world (and historical America); the phrase “red states and blue states” originated in 2000 with one particular TV announcer’s choice of colors (from the American flag). So is my red your blue? In this sense, yes!

And this is helpful to talk about color because it’s true for basically everything you could possibly want to talk about, and color perception is the MOST basic aspect of reality you can get, or close to it.

Which is maybe why the philosophers use it in these thought experiments!

Part of why Hoffman is so radical is that he highlights that it’s actually something-like-meaningless to say “my interface with Y and your interface with Y are more alike than those interfaces are with Carol’s”. They can’t be alike or unalike; they can’t be compared; they’re made out of different stuff! Mine are made out of my experiences and yours are made out of yours. That’s all in terms of the subjective interface, that is—you can obviously talk about our behaviors having something in common.

The actual question is ITSELF about interfaces again. Not “do you and I see this the same way?”—we don’t. Full stop. Next question.

And the next question is “do we want to describe how we see it, the same way?” on a propositional level.

Or “is my interface with Y able to interface with your interface with Y?” on more of a participatory level.

And it might be that we can effectively dance together, even though we have radically different descriptions of what dance is or what it means to us or how it works.

Having said that… there’s still clearly obviously a thing that we mean when we talk about difference, or perhaps distance. While writing this up, I intuitively used the metaphor of “how far apart people are” and this is actually a different metaphor than similarity, although we often use distance as a metaphor FOR similarity. And maybe we’ll also talk about what lies in that distance—is it a smooth pathway or a complex dance?

Ah yeah, it’s like, how complicated is the transformation we need to do to my interface in order to turn it into your interface? And in some super simple cases that transformation is basically a null operation except for the “entirely composed of your experience, rather than entirely composed of mine”, but they share the same structure and they sit in each of us in a similar way, so we round them to “same”.

In particular, mathematical objects can be extremely like this (though not as much as people might assume). Also if we have a shared experience of something happening to us both together, that can become a reference point (assuming we experienced it “much the same way”).

So let’s try that.

have you found a way to suss out how ~~different~~ far away someone else’s interface really is?

There’s a kind of vast vector space here, and again it depends a bunch on context.

Like if there’s certain kinds of things at stake, and low trust, it can be very hard to get people to agree with boring statements that they would other times themselves utter as axiomatic premises (eg “we live in a society” or “humans are a kind of animal”) because they don’t want to allow the other person to set the frame and then force them using logic into accepting some conclusion they disagree with.

In general, one of the things I keep an eye out for is if there’s something where it’s easy for me to express it to Alice and hard for me to express to Bob. That’s a sign that me and Bob have a big weird gap/chasm/shear in relation to that topic or knowing, not necessarily “in general”.

Interestingly I suspect it’s possible (tho not super common) that Bob could ALSO express relevant things to Alice that he can’t express to me.

This could be because Alice has a viewpoint that is a deep synthesis of mine and Bob’s. [depth perception metaphor]

More commonly, Alice has the ability to take either of our perspectives at a given time, but not both. So she can step into one frame or the other, and resonate with what we’re saying. And both of those are sort of workable ways of seeing things for her.

Whereas if I were to try to see things Bob’s way, or vice versa, it would produce some major discomfort for me because it would seem to violate something I know about the world.

It could be that Alice does not actually have that additional knowing, and that’s why she’s able to hear us, or it could be that she has that knowing and also has some additional knowing that makes it not-an-issue.

Important to track here is a principle I have which is something like “everybody contains explanations of literally everything they have ever experienced. Necessarily this involves making a bunch of absurd generalizations”.

But people can have very compartmentalized explanations, where they can’t actually simultaneously explain X and Y, and if you get them to try they get distracted or flustered or angry.

This is kind of developmental stuff I guess also, except it doesn’t necessarily map onto any common ladder like Kegan stages.

As for…

Like, I wonder whether “thing” and “other” and “space” are coded radically differently between people.

This seems very very true to me, not just of the words but of what we would consider the referent.

[this post is half-baked and I didn’t finish this part and as of the moment I’m publishing it I’m not even sure what exactly I was gonna get at here]

As someone currently experiencing substantial amounts of collective intelligence on Twitter, here’s some of what I’m seeing as the emerging edge of new behaviors and culture, and one bottleneck on our capacity to think together and make sense of the world.

Some of us are pioneering a new experience of Twitter that’s amazing, and that wouldn’t be possible on any other platform that exists today.

Conversation is thinking together.

Collective intelligence is, at its core, good conversation.

Many people, on and off Twitter, think of it as a shouting fest, and parts of it are. And… at the same time, on the same app, with the same features but some different cultural assumptions, there are pockets where people are meeting the others, making scientific progress, falling in love, healing their trauma, starting businesses together, and sharing their learning processes with each other.

Those sorts of metrics—as hard to measure as they are—form a kind of north star for Twitter. This creature has the potential to be the best dating app (for some people) and a way better place for finding your dream job than LinkedIn (for many people). And so on.

Cities have increased creativity & innovation per capita per capita, ie when you add more people each person becomes more, because more people & ideas can bump into each other. The internet is a giant city, and this is far more true on Twitter than any other platform, particularly because of how tightly it allows the interlinking of ideas with Quote Tweets.

Twitter is very much about “what’s happening [now]” but, as the world has been collectively realizing over the past decade, simply knowing “what’s happening” in some isolated way is meaningless and disorienting. Meaning comes from filtering & distilling & contextualizing what’s happening, and this is part of what Twitter is already so brilliant for, because everyone can talk to everyone and the ultra-short-form non-editable medium encourages you to tweet today’s thoughts today rather than drafting them today, editing them tomorrow, then scheduling them for next week’s newsletter.

When someone makes a quote-tweet, they’re essentially saying “I have some thoughts I’d like to share, that relate to the tweet here”. This might be a critique of the quoted tweet/thread, or it might be using the quoted material as a sort of footnote of supportive evidence or further reading or ironic contrast. This meta-commentary is very powerful, whether it’s used by someone reflecting “I think what I really meant to say here was” or someone framing a thread they just read as an answer to a particular question they and their followers might care about.

Currently, however, it’s impossible to QT two or more tweets at once. This means that in the natural ontology of Twitter, there is no way to properly compare or contrast or relate different thoughts.

This contributes, I think, to the fragmented & divergent quality of thinking on Twitter: the structure of the app makes it hard to express convergent thoughts. You can use screenshots… but then all context & interlinking & copy-pastability is destroyed. You can have a meta-thread that pulls a bunch of things together… but each tweet in that thread is still only referencing one other tweet, so there’s no single utterance that performs the act of relating other utterances.

The number of utterances that need to connect two other pre-existing utterances is huge. Thoughts shaped like:

Similarly to how the #hashtag & @-mentions evolved from user behavior, and the Retweet functionality evolved out of people copying others’ tweets and tweeting them out with “RT @username: ” at the start, and Quote Tweeting evolved out of people pasting a link to another tweet within their tweet… MultiQT is a natural evolution of the “screenshot of multiple tweets” and “linking tweets together as a train of thought using multiple QTs in a thread” behaviors.

I didn’t even realize quite how much I’d want this until I started mocking up the screenshots below by messing with the html in the tweet composer and being so sad I couldn’t just hit “Send Tweet”. I can already tell that like @-mentions and RTs, once we’re used to this it’ll feel absurd to think we ever lived without it.

There are a lot of interfaces that irk me, not because they’re poorly designed in general, but because they don’t interface well with my brain. In particular, they don’t interface well with the speed of brains. The best interfaces become extensions of your body. You gain the same direct control over them that you have over your fingertips, your eyes, your tongue in forming words.

This essay comes in two parts: (1) why this is an issue and (2) advice on how to make the best of what we’ve got.

One thing that characterizes your control over your body is that it (usually) has very, very good feedback. Probably a bunch of kinds you don’t even realize exist. Consider that your muscles don’t actually know anything about location, but simply exert a pulling force. If all of the information you had were your senses of sight and touch-against-skin, and the ability to control those pulling forces, it would be really hard to control your body. But fortunately, you also have proprioception, the sense that lets you know where your body is, even if your eyes are shut and nothing is touching. For example, close your eyes and try to bring your finger to about 2cm (an inch) from your nose. It’s trivially easy.

One more example that I love and then I’ll move on. Compensatory eye movements. Focus your gaze on something at least two feet away, then bobble your head around. Tried it? Your brain has sophisticated systems (approximating calculus that most engineering students would struggle with) that move your eyes exactly opposite to your head, so that whatever you’re looking at remains in the center of your gaze and really quite incredibly stable even while you flail your head. This blew my mind when I first realized it.

The result of all of these control systems is that our bodies kind of just do what we tell them to. As I type this, I don’t have to be constantly monitoring whether my arms are exerting enough force to stay levitated above my keyboard. I just will them to be there. It’s beyond easy―it’s effortless.

Now, try willing your phone to call your friend. You’re allowed to communicate your will using your voice, your hands, whatever. Why does it take so many steps, or so much waiting?

A short reflection on two even shorter words.

The other day, I was reading the details of various phone services while logged into my carrier’s website. I came across a section that read:

Long distance charges apply if you don’t have an unlimited nationwide feature.

…so I’m like “Wait? Do I have an unlimited nationwide feature?” and it occurs to me that there was no reason for them to use the word “if” there. I’m logged in! Their system knows the answer to the if question and should simply provide the result instead of forcing me to figure out if I qualify.

In some cases, of course, it might be valuable to let the user know that the result hinges on the state of things, but there’s an alternative to “if”. It’s called “since”. So that page, instead of what it said, should have been something more like:

Long distance charges would apply, but they don’t since you have an unlimited nationwide feature.

or

Long distance charges apply since you don’t have an unlimited nationwide feature. Upgrade now

I was initially going to just talk about software, but this actually applies to any kind of service, including one made of flesh and smiles. The keystone of service is anticipation. A good system will anticipate what the user needs/wants and will provide it as available. This means not saying “if” when the if statement in question can be evaluated by the server (machine or human) instead.
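For the software case, here is a minimal sketch of what "evaluate the if on the server" might look like. The `Account` class, its `has_unlimited_nationwide` field, and the `long_distance_notice` function are all hypothetical names made up for illustration, not anything from my carrier's actual system. The conditional wording only survives when the server really can't know the answer, for instance when nobody is logged in.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Account:
    """Hypothetical logged-in account; the field name is an assumption."""
    has_unlimited_nationwide: bool


def long_distance_notice(account: Optional[Account]) -> str:
    """Return the notice text, resolving the condition whenever it is known."""
    if account is None:
        # Not logged in: the server genuinely cannot evaluate the condition,
        # so this is the one case where "if" is the honest wording.
        return ("Long distance charges apply if you don't have an "
                "unlimited nationwide feature.")
    if account.has_unlimited_nationwide:
        # Known state: say "since" and give the result directly.
        return ("Long distance charges would apply, but they don't since "
                "you have an unlimited nationwide feature.")
    return ("Long distance charges apply since you don't have an "
            "unlimited nationwide feature. Upgrade now")


if __name__ == "__main__":
    print(long_distance_notice(Account(has_unlimited_nationwide=True)))
    print(long_distance_notice(Account(has_unlimited_nationwide=False)))
    print(long_distance_notice(None))
```

The point of the design is just that the branch happens in the code instead of in the reader's head.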

Framing is important. There are many other examples of this (in fact, I’m in the process of compiling a list of helpful ways to reframe things) but here’s a simple one. It relates to the word “but”. Specifically, to the order of the two clauses attached to the “but”. The example that prompted me to jot this idea down was deciding which of the following to write in my journal:

As is readily apparent, the second part becomes the dominant or conclusive statement as it gets the final word against the first statement. In this case, I opted in the end to use the former option, because it affirms the value of reading the book rather than suggesting it’s not worth it in the long run. The book in question is a now-finished serial ebook called The Surprising Life and Death of Diggory Franklin, and the sentences above should give you an adequate warning/recommendation not to read it.

This bit about the buts is obvious in hindsight, but I found that laying it out explicitly like this helped me start noticing it a lot more and therefore reframing both my thoughts and my communication.

Say you want to express to a cook both your enjoyment of a meal and your surprise at its spiciness. There are several options:

…but, maybe the extra spiciness didn’t detract from the enjoyment. In that case, a better conjunction would be “and”. Again, like before, this sounds obvious, but once consciously aware of it I started catching myself saying “but” in places that didn’t adequately capture what I wanted to say or in some cases were rude. The chef remark above has the potential to be rude, for example.

If you want to add to the reframing list, comment below or shoot me an email at malcolm@[thisdomain].

This mockup of what drawing was like on my touchscreen shows clearly which parts of the screen don't work.

I’ve been the owner of an Android phone (HTC Incredible S) for 9 months now, but today I sent it off to get serviced because the touchscreen has been acting up. I first noticed the touchscreen behaving strangely this fall, when horizontal bands of the screen would sometimes be unresponsive. On the right, in a mockup of a drawing app, you can see how poking the screen produced no dots on the band, and so on. This was even more annoying when trying to type, because the bottom band passed right through the home-row (if you can still call it that on a touchscreen) of the keyboard.

Anyway, at first it would just do this for a few minutes every day, but then it started to act up like this consistently. The problems got progressively worse until by mid-January I could never be certain at any given moment that I’d be able to use my phone at all. Furthermore, touch events started happening in the wrong places—I would try to select “Yes” and the screen would select “No”, or taps would become long presses. Sometimes the phone would seem to think I had touched somewhere on the screen when it was sitting a foot away on my desk, and would navigate interfaces on its own.

I think this is a terrible analogy, because I think EQ is easier to assess on the surface than IQ is. Anyway, I didn't make the image.

Emotional intelligence (hereafter EI, though often called EQ like IQ) is a term that is used to describe one’s ability to perceive others’ emotions and respond appropriately in social situations. As the image here from Psychology Today illustrates, EQ is ascribed a fair amount of importance. The same concept is also embodied in maxims like “It’s not what you know, but who you know”.

So if we were to map the concept of IQ to technology, it could refer to a number of things, but at the forefront is processing power and efficiency/effectiveness of algorithms. Of additional consideration is the ability to learn new things, which is likely where the concept of a smartphone comes from: in addition to built-in phone features like SMS and alarms, smartphones can play games, interact with social networks, and let us draw, to mention just some of the hundreds of thousands of apps out there.

How, then, would we map EI, or EQ? Emotional intelligence, for a computer or smartphone, or any piece of software, is its interface. A piece of software has good EI if it responds the way you expect it to, and even better EI if it anticipates your needs and makes it easy to accomplish your goals. When our technology does this, we adore it, and when it fails to do so, we abhor it.

However, this feeling of dislike can actually go further than just general annoyance or frustration at an inability to properly perform a task using some interface. I realized this rather profoundly with my defective smartphone when I had been trying in vain for probably five minutes to do something really simple like call someone. I was completely unable to navigate the interface because the screen would constantly press other places or simply refuse to push where I wanted. How do you think I felt? I’m actually going to give you space to guess. Think of an adjective that you would expect to most accurately describe my feelings at that point.

Did you say frustrated? Angry? Disgusted? Resentful? Those are all true, but that’s not exactly right. When I couldn’t use the interface, I felt hurt. It sounds odd, but I had an emotional response in my chest that I’ve recognized as the one I feel when someone is being cruel to me (I was bullied a bit when I was younger). It was probably the second time I had this response that I realized how strange that was. After all, at no point in this process had anyone set out to hurt me. Why did I feel like my phone was being mean to me?

After some reflection, I concluded that how I really felt was misunderstood. I was trying to communicate with my phone, via its touchscreen interface (which was designed for human fingers) and it seemed to be completely misunderstanding my instructions and ignoring them or vehemently disobeying them. I had unwittingly personified my phone to a huge extent, so it really hurt when I felt like it was ignoring me while I was going out of my way to communicate with it (eg. turning the phone to put UI elements in different places so I could access them). This would be like asking someone close to you (smartphones are companions) for help and having them plug their ears, sing, and then do something random that might be slightly related to what you were asking. They’d be taunting you.

I’m a designer, a hacker, and an engineering student, so I make things with interfaces. In fact, I’m quite passionate about user experience (UX) and interface design. While my phone’s flaky touchscreen was obviously not intentional, I believe that what I’ve learned here applies to conscious interface design as well. This kind of revelation is less of a “how” than a “why”. That is, prior to these experiences, I had had no idea that interfaces could cause such an emotional impact.

When my smartphone became stupid, it didn’t lose processing power. It simply lost the ability to communicate with me, and that felt far worse than I could have possibly expected. I’m going to remember this every time I design an interface.